‘Entropy and the Internet of Useful Things’

Given at South by Southwest Interactive in Austin on March 8th 2015.

Hi, I’m Ross Atkin. I’m a product designer and researcher, and this talk is called Entropy and the Internet of Useful Things. It’s about how the principles of entropy and the second law of thermodynamics can be applied to understand the connected devices landscape, and how this understanding can be used to identify opportunities for useful and meaningful new products.

The conceptual basis for this talk is taken from my masters project at the Royal College of Art which I did five years ago but most of the examples are bang up to date. I’m going to include some examples from my own work and some from others. We’re going to cover some fairly abstract stuff at the beginning but bear with me and I promise we’ll land somewhere useful!

So what’s wrong with the Internet of Things? I was going to present a series of ridiculous fictional products but I realised they weren’t actually more ridiculous than some of the stuff coming on the market so I drew this.

I think it sums up where we currently are.

As I’m a researcher and user-centred designer I could just say we’re here because technologists aren’t taking a people-centric approach. Aren’t researching and responding to real problems. Aren’t connecting with human needs and desires beyond pure vanity.

All of this is true but I didn’t think it would make such an interesting talk. This talk is going to contain plenty of human-centric pieces of technology, but it will also develop some underlying principles that can be applied beyond the context of a particular product. Principles that can be applied when envisioning how internet of things technology will change the way we live.

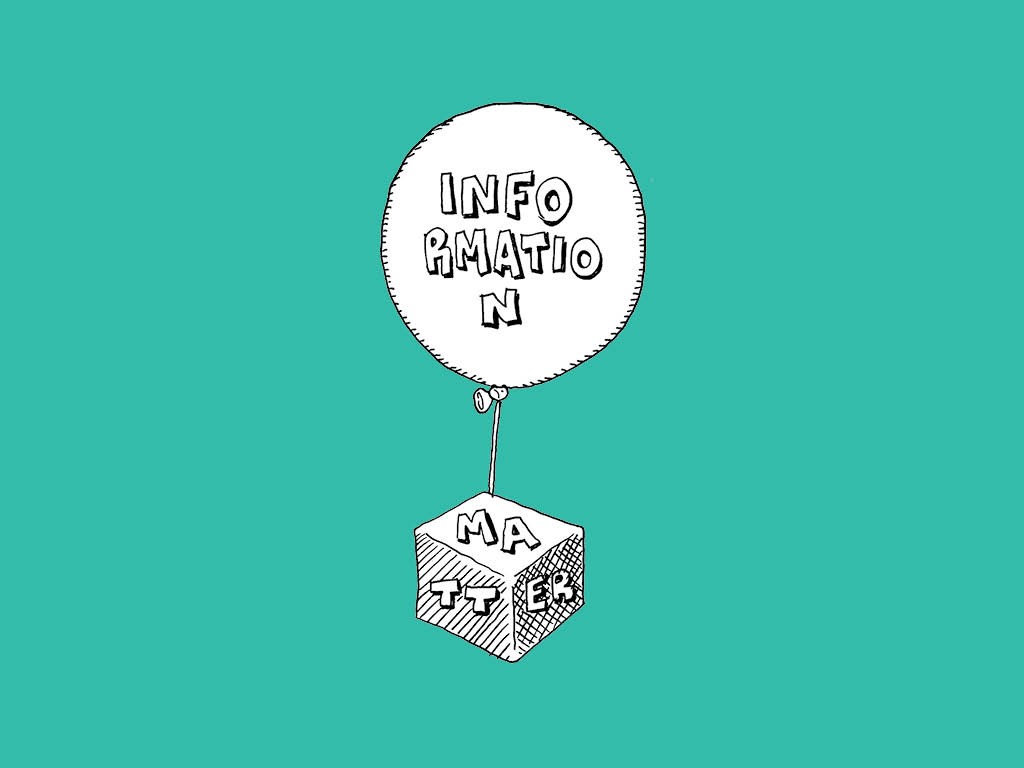

Entropy and the second law of thermodynamics are relevant here because they apply to the relationship between matter and information.

The basket of technologies that constitutes the Internet of Things is our first opportunity to seriously challenge this division.

To bind information much more closely to matter.

The interaction of information and matter is governed by the second law of thermodynamics, the law of Entropy.

This started off as a preoccupation of some engineers trying to solve the prosaic problem of making steam engines more efficient. It ended up as the most profound and widely applicable idea in science.

An idea that explained why we experience time itself.

Einstein said of it:

The really applicable bits of the story are the ways nature gets around the second law, but we’ll get to that. Before that I want to tell you a story involving some men with really exceptional facial hair.

And before that we should probably say what entropy is

It’s a scientific measure of disorder.

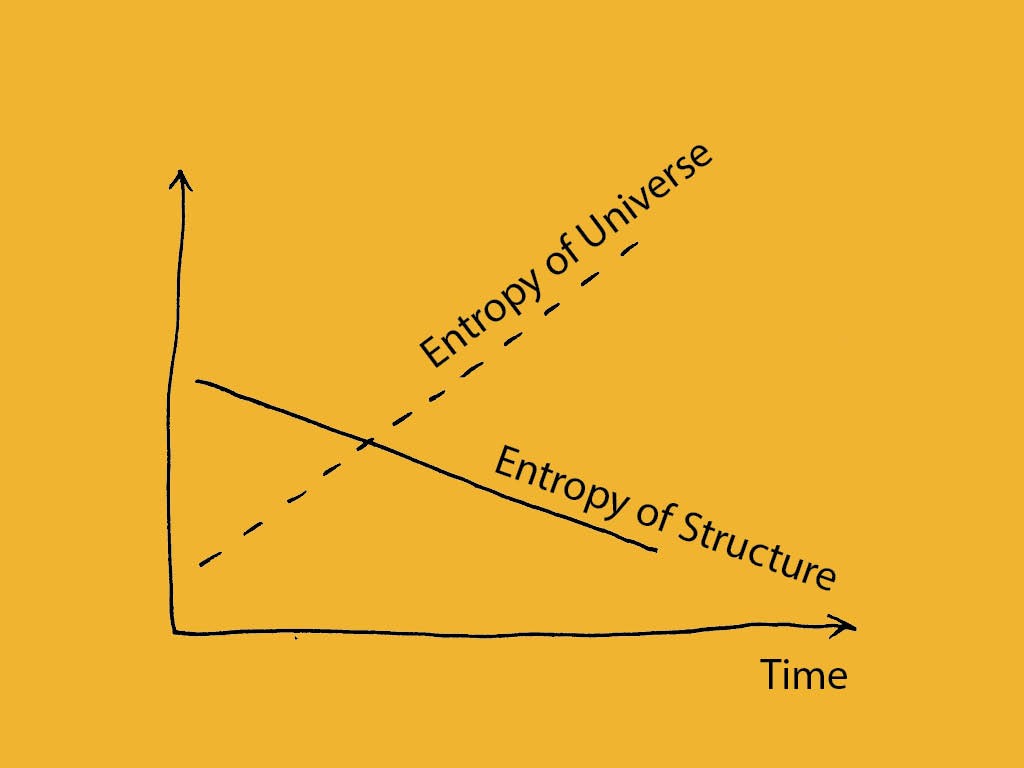

And the second law of thermodynamics says that the Entropy of the universe as a whole always increases.

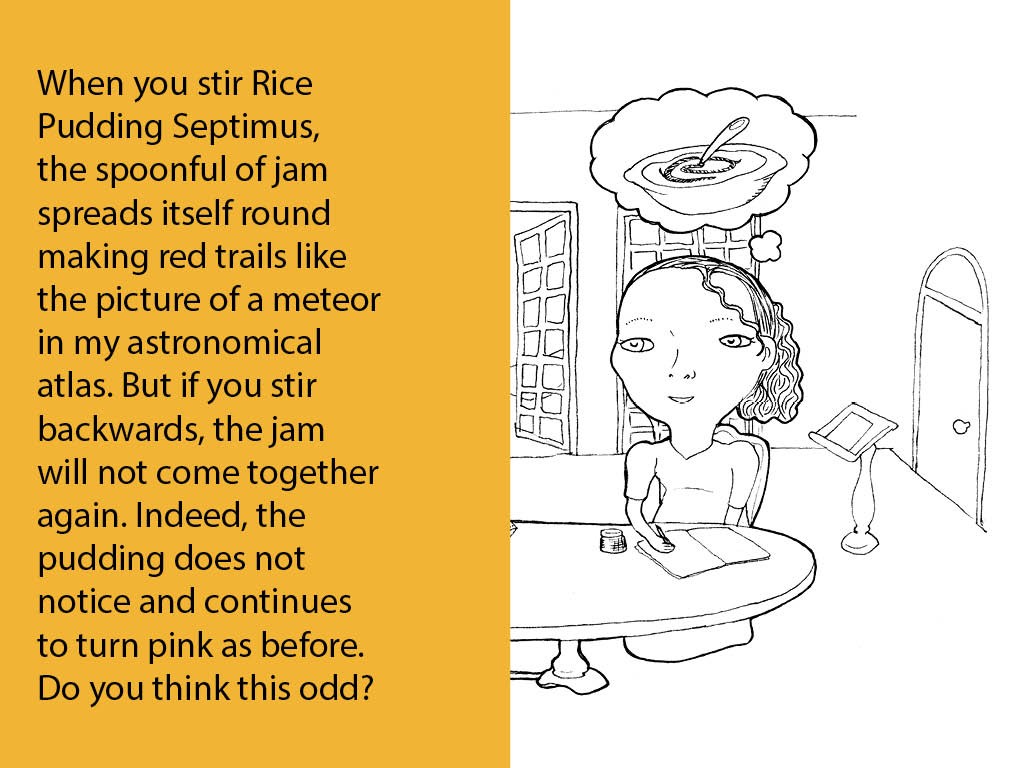

But before we get into the engineers’ and physicists’ explanations of it, here’s one from a playwright. In the first scene of Arcadia by Tom Stoppard, Thomasina and Septimus have this conversation.

I like this explanation because it explains the second law in a nice and easy to visualise way. You cannot un-mix the rice pudding even if you stir backwards. And it captures the relationship between entropy and time.

I thought I’d illustrate this relationship with this video. Before the second law of thermodynamics was discovered in the middle of the 1800s, physics was symmetrical. If a planet is orbiting one way or the other it doesn’t affect Newtonian mechanics. The second law introduced the idea of irreversible processes, and with it the arrow of time, to physics. In this video we observe film of a Newtonian process playing backwards and forwards. We can’t tell which film is backwards because there is no change of entropy. In the second clip the entropy change makes it clear which way time is running.

Anyway I promised you beards so let’s get on to them. Though we start with a beardless man who died of cholera when he was 36.

He was called Nicolas Sadi Carnot and was a French engineer. He was exploring why steam engines could only be made so efficient and published a study with the awesome title Reflections on the Motive Power of Fire.

This contained a limitation on the efficiency of an engine which was independent of its design and depended only on the temperatures of the hot part and the cold part. It also introduced the idea that all natural processes were irreversible.
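In its modern form (written with absolute temperatures, which came after Carnot's own work), that limit is usually stated as follows. The symbols are the standard textbook ones rather than anything from the original study:

$$\eta_{\max} = 1 - \frac{T_{\text{cold}}}{T_{\text{hot}}}$$

As a rough worked example, an engine running between a hot part at 450 K and a cold part at 300 K could never exceed $1 - 300/450 = 1/3$, or about 33% efficiency, however cleverly it was designed.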

His work was roundly ignored by the world until it was picked up a quarter century later by this crowd who were the first to articulate what we now consider to be the laws of thermodynamics.

They had some different ways of saying it but basically the first law says

Energy is always conserved

And the second law,

All energy is not equal and the quality of energy always reduces

Because they were primarily concerned with heat and engines these guys liked to talk about heat energy and work energy, with work energy having a higher quality than heat energy. Entropy described the degree of imperfection of a quantity of energy.

For entropy to be applied beyond engines we have to fast-forward another quarter century, by which time the locomotive engines looked like this

And this guy Maxwell had developed the theory of gases as particles bouncing around with randomly distributed speeds.

According to this theory the particles in a hot gas had a higher average speed than the particles in a cold gas giving rise to temperature.

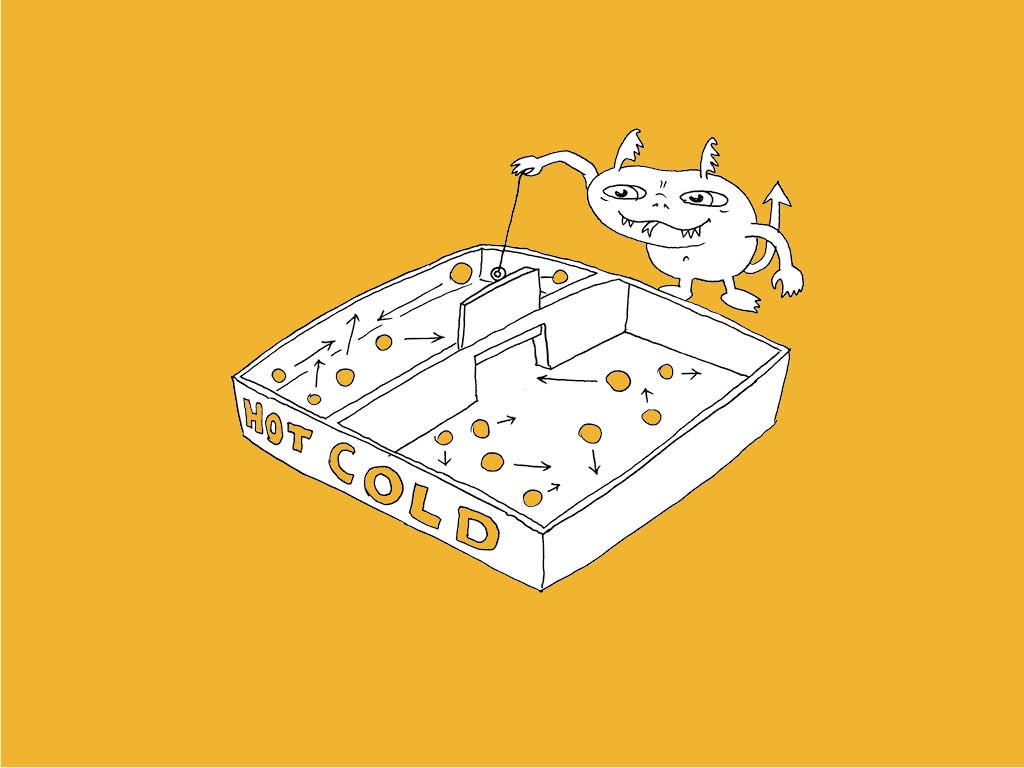

Maxwell proposed a situation in which the second law of thermodynamics could be violated. It involved a demon, a creature so small that it could see the individual particles of a gas. This demon would be in command of a tiny trap door between two chambers, one containing a hot gas and one a colder one. By watching out for an unusually fast particle on the cold side and opening the door when it approached, or vice versa for a slow particle on the hot side, the demon could cause energy to pass from cold to hot, violating the second law.

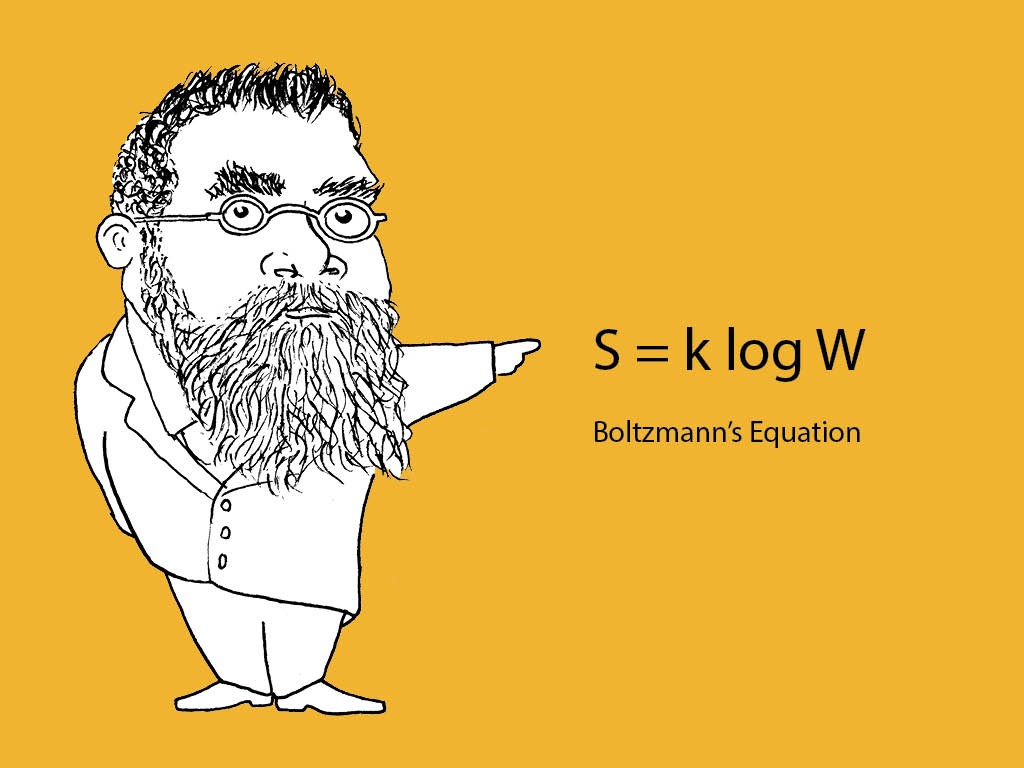

I’m going to ask you to wait a bit for the explanation of why the demon’s activity is limited and move on to probably the best beard of all, that of Ludwig Boltzmann. He was able to quantify entropy based on the number of possible different configurations a set of particles can have with this equation.

It turned out so important they wrote it on his tomb.

It’s important to us because the configurations don’t just have to be of particles in a gas. The equation can apply to the configuration of anything.
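For reference, the equation carved on Boltzmann's tomb is usually written today in the form below, where $S$ is the entropy, $k_B$ is Boltzmann's constant and $W$ is the number of possible configurations consistent with what we can observe:

$$S = k_B \ln W$$

Because of the logarithm, doubling the number of possible configurations adds a fixed amount, $k_B \ln 2$, to the entropy, whatever the things being configured happen to be.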

This point is contentious but many feel the full generalisation of this principle came from Claude Shannon in his 1948 paper A Mathematical Theory of Communication. I’m guessing that there will be some people in the crowd who are pretty familiar with Shannon’s concept of information entropy, which imposes a limit on the amount a message or signal can be compressed without loss. It is basically a measure of the uncertainty in the message or, put another way, the amount of information required to fully describe the configuration of the message.
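For anyone who hasn't met it, here is a minimal sketch of Shannon's measure in Python. It makes a simplifying assumption: each symbol is treated as independent and only symbol frequencies are counted, so it ignores patterns between symbols. The function names are just illustrative.

```python
import math
from collections import Counter


def shannon_entropy_bits(message: str) -> float:
    """Average information per symbol, in bits, based on symbol frequencies."""
    counts = Counter(message)
    total = len(message)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def min_compressed_bits(message: str) -> float:
    """Lower bound on lossless size when symbols are coded independently."""
    return shannon_entropy_bits(message) * len(message)


if __name__ == "__main__":
    for text in ("aaaaaaaa", "abababab", "abcdefgh"):
        print(text, shannon_entropy_bits(text), min_compressed_bits(text))
```

A message made of one repeated symbol carries no uncertainty, while eight equally likely symbols need three bits each, which is the sense in which entropy limits compression.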

If we think about the thermodynamic or chemical idea of entropy related to the dispersal of energy and particles, we can view it as a special case of information entropy. Here the entropy is the information required to completely describe the configuration of the particles, which increases as they disperse.

Returning to Maxwell’s demon…

an IBM researcher called Charles Bennett showed that as the demon could not store an infinite amount of information it would have to throw information about older particle movements away in order to capture new ones. This process of throwing information away would increase the entropy of the universe.
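The cost of throwing information away can be put in numbers using a result usually known as Landauer's principle, which this argument builds on. This is added context rather than something from the slides. Erasing one bit at temperature $T$ dissipates at least

$$E_{\min} = k_B T \ln 2$$

which at room temperature (about 300 K) comes to roughly $3 \times 10^{-21}$ joules per bit. That is tiny, but it is never zero, and that is enough to keep the demon from beating the second law.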

So we have seen that entropy applies to everything down to the building blocks of matter, and that it is fundamentally an issue of information. This is where I picked up my research to see if the second law could be meaningfully applied in a design context.

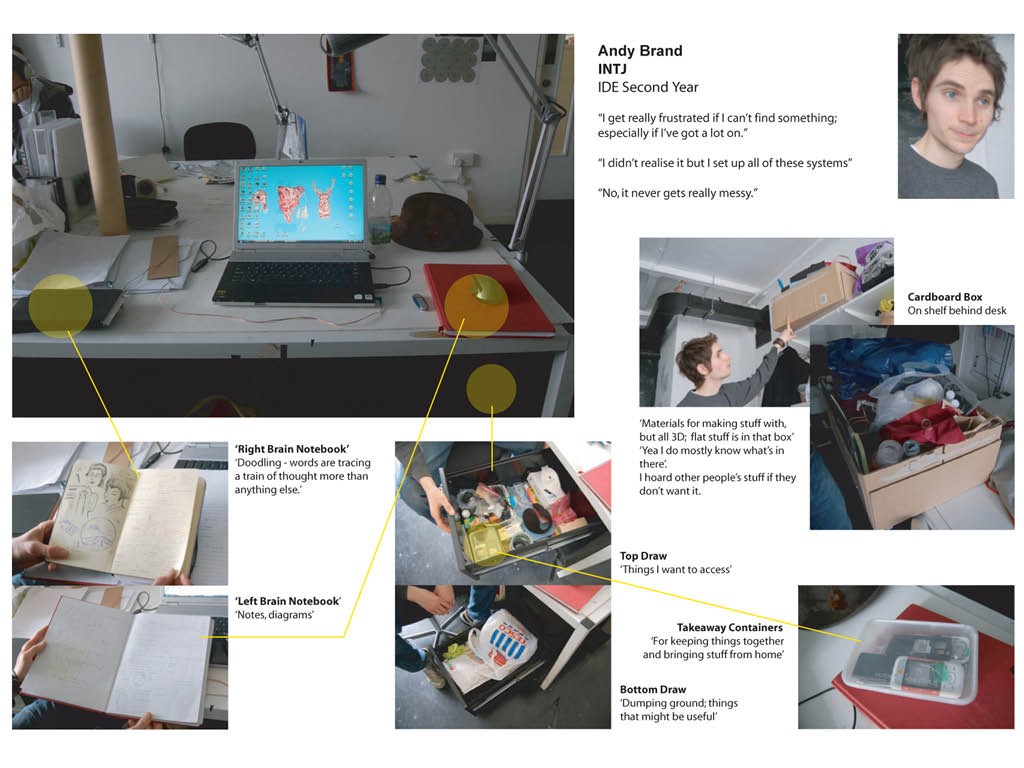

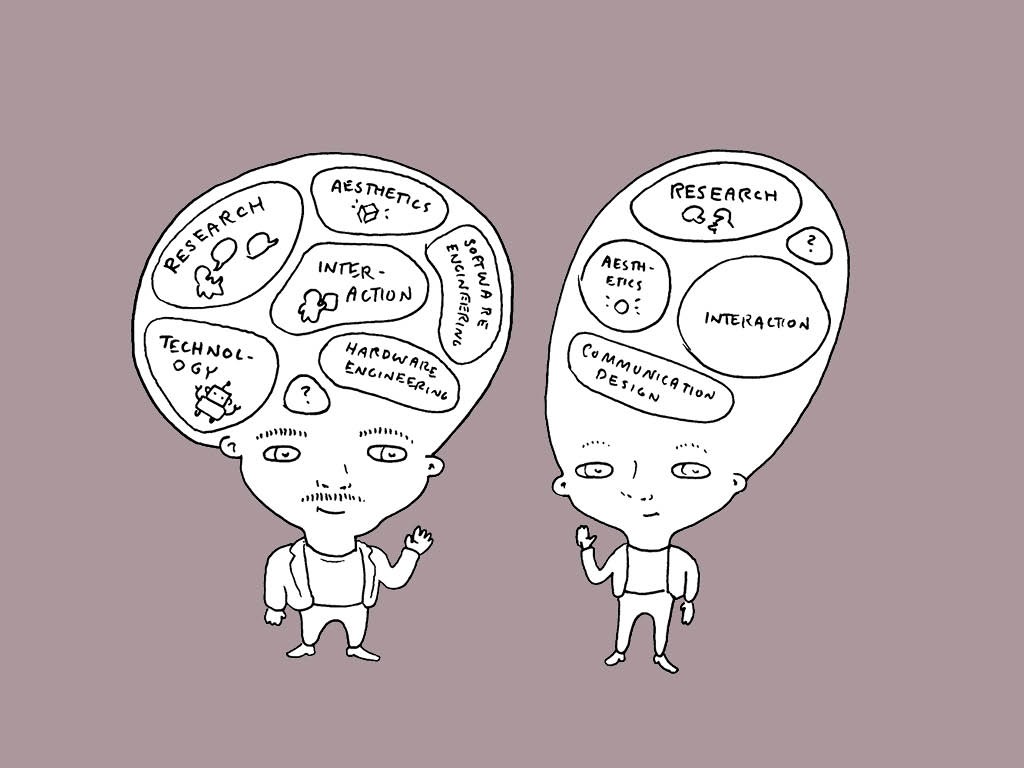

I was interested in how we manage the objects around us,

what constitutes order compared to a mess or rubbish.

I interviewed my colleagues about their desks and the systems they used to organise their stuff.

I tried, without huge success, to quantify the entropy of a stream of rubbish or recycling.

I started to learn about what mess really was

My conclusions:

A messy looking desk is only actually a mess if its user doesn’t know where things are, which is often not the case – again here information is key.

An incredible illustration of this principle is this.

It looks unbelievably messy right?

Actually this is one of the most highly ordered (at least in terms of information) collections of objects in the world.

Does anyone know where it is?

It’s in the middle of a museum in Dublin.

And it’s the studio of Francis Bacon, as it was when he died. Originally it was in Kensington in London, around the corner from the Royal College of Art.

It took a team of archaeologists and conservators three years to document, enter into a database, move and re-arrange over seven thousand items down to tiny scraps of paper and bits of dust, exactly as it had been. That information in the database means that the configuration of these objects is more perfectly described than almost any other collection anywhere in the world.

I also created a new formulation of the second law:

Interesting as all of this was it wasn’t getting me any closer to potentially useful design outputs, and passing my MA.

Just knowing the inevitability that entropy always increases can leave you feeling a bit nihilistic.

Luckily thermodynamics had some more tricks to teach.

If the second law says that natural processes tend to increase disorder how come ordered structures

like crystals and trees

and tigers constantly emerge out of the mess of nature?

This conundrum is explained by a scientist called Ilya Prigogine. He recognised the contradiction.

He defined a new term to describe naturally organised structures like crystals and tigers thermodynamically – dissipative structures – these are

So basically as long as you have a flow of energy that is increasing the entropy of the universe as a whole you can use it to support the reduction of entropy of a particular aggregation of matter, for a while.

What’s interesting about this is the manner in which nature manages this spontaneous emergence of order. Perhaps unsurprisingly it’s all to do with information but what is important is the way nature deals with this information.

If we think about the simplest emergence of order in nature, crystallisation,

there’s not some instruction manual that explains how the molecules are supposed to fit together. Through their geometries and charges the information is coded into their form. Because of this they self-assemble correctly into ordered structures.

If we go up several orders of complexity we find living organisms. Here we find much larger stores of information, the plans for the organism, but stored inside each of its constituent elements, cells. This information is actually stored in a really complicated crystal, DNA. Cellular reproduction begins with a crystallisation process.

This organising principle is in stark contrast to the way we humans tend to organise objects and information now. I’ve illustrated these two approaches here.

We have tended to rely on an object’s context to carry the information about it. That’s why we have to tidy up.

Nature doesn’t tidy up because it can always get at the information when it needs it, as it’s bound tightly to the matter.

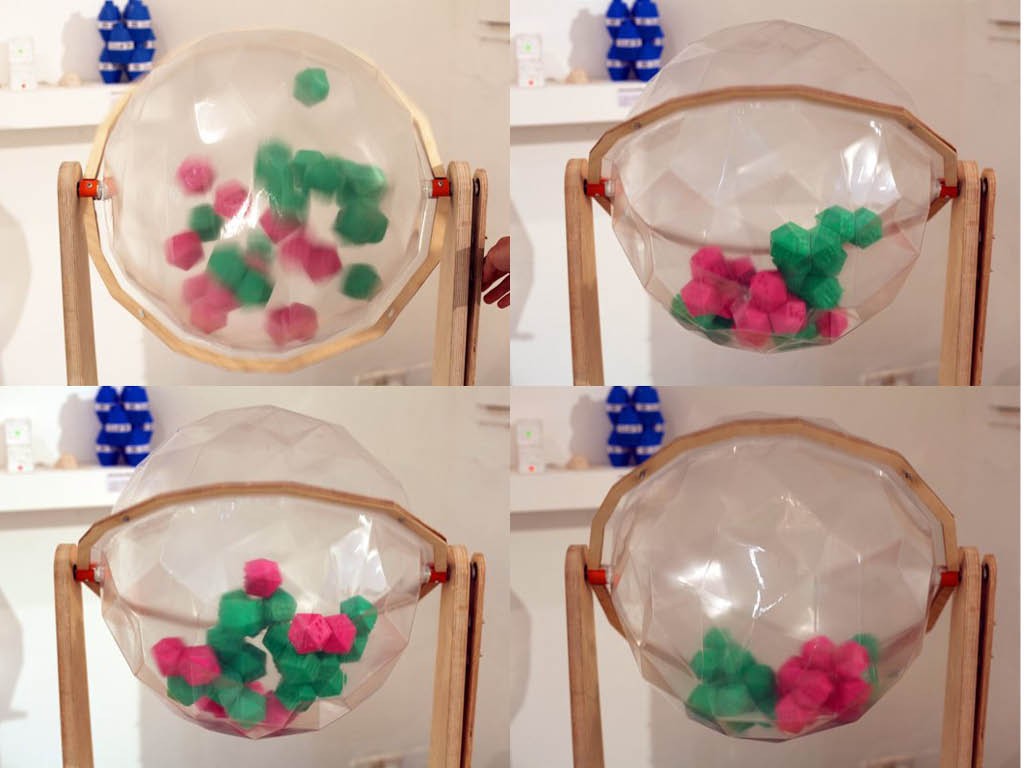

As part of my research I built two demonstrations of how we could apply the natural principle to human-scale objects.

I went through a process of developing physically self-organising objects, and a series of entropy machines to test them in.

Like crystals these objects have information physically coded into them and when the machine ran at the right speed they would un-mix into two lumps.

I like these because their information content is explicitly part of their form but the principle doesn’t need to be so literally applied.

In response to my earlier research on desks I produced the MessSearch.

This made searching your physical desktop as easy as searching your computer.

Here the information was embedded in the objects using RFID and coded into a database on my PC.
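To make the idea concrete, here is a minimal sketch of that kind of lookup in Python. It is not the original MessSearch code: the class names, the tag IDs and the idea of named reader zones are all assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class TaggedObject:
    tag_id: str                 # hypothetical RFID tag identifier
    description: str            # free text entered when the object was tagged
    last_seen: str = "unknown"  # hypothetical reader zone that last saw the tag


class DeskIndex:
    """Keeps the information bound to each object, wherever it ends up."""

    def __init__(self):
        self._objects = {}

    def register(self, tag_id, description):
        self._objects[tag_id] = TaggedObject(tag_id, description)

    def record_sighting(self, tag_id, zone):
        # Called whenever a reader detects a tag; unknown tags are ignored.
        if tag_id in self._objects:
            self._objects[tag_id].last_seen = zone

    def search(self, query):
        # Return every object whose description contains all the query words.
        words = query.lower().split()
        return [obj for obj in self._objects.values()
                if all(word in obj.description.lower() for word in words)]


if __name__ == "__main__":
    desk = DeskIndex()
    desk.register("tag-001", "Blue scalpel handle")
    desk.register("tag-002", "Steel rule 300mm")
    desk.record_sighting("tag-001", "left pile")
    for found in desk.search("scalpel"):
        print(found.description, "is in", found.last_seen)
```

The point of the design is that nothing ever has to be put back in its place: the index is updated by the objects being seen, not by anyone tidying.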

Sorry about the music on this video. It was 2009 and royalty free music was hard to come by.

Thanks for hanging on in through all of that abstract theory!

I promised you lessons and examples and here they come.

The first one is that using the internet of things, and the ability to bind matter to information, we can reorganise the world to be messier without paying a price!

One of my favourite recent internet of things startups is doing just that, updating the spirit of the MessSearch with the latest technology.

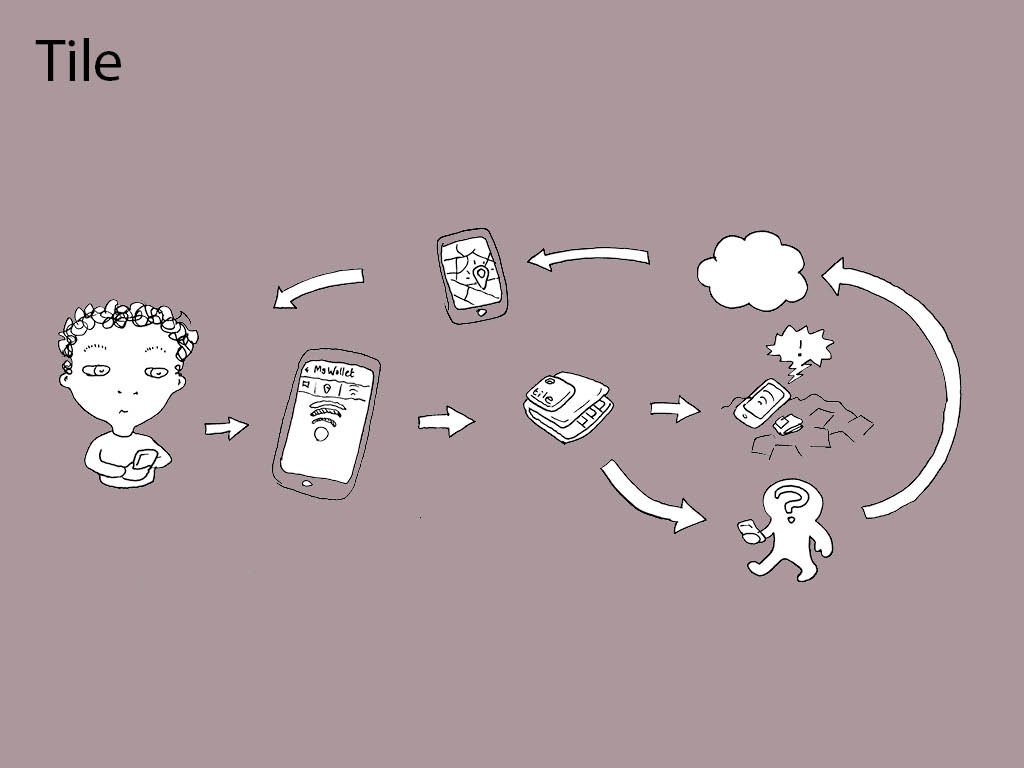

It’s Tile, and it’s using Bluetooth Low Energy to do object finding using smartphones. Because they use phones and the phones’ own location sensors, you can locate one of your objects if it’s been detected by the phone of another user of the system. I hope they can achieve sufficient penetration that this feature really starts working.

It seems like with BTLE we have actually got a technology that works at a scale and power level that really fits our object world, so I’m really excited about what’s still to be done with it.

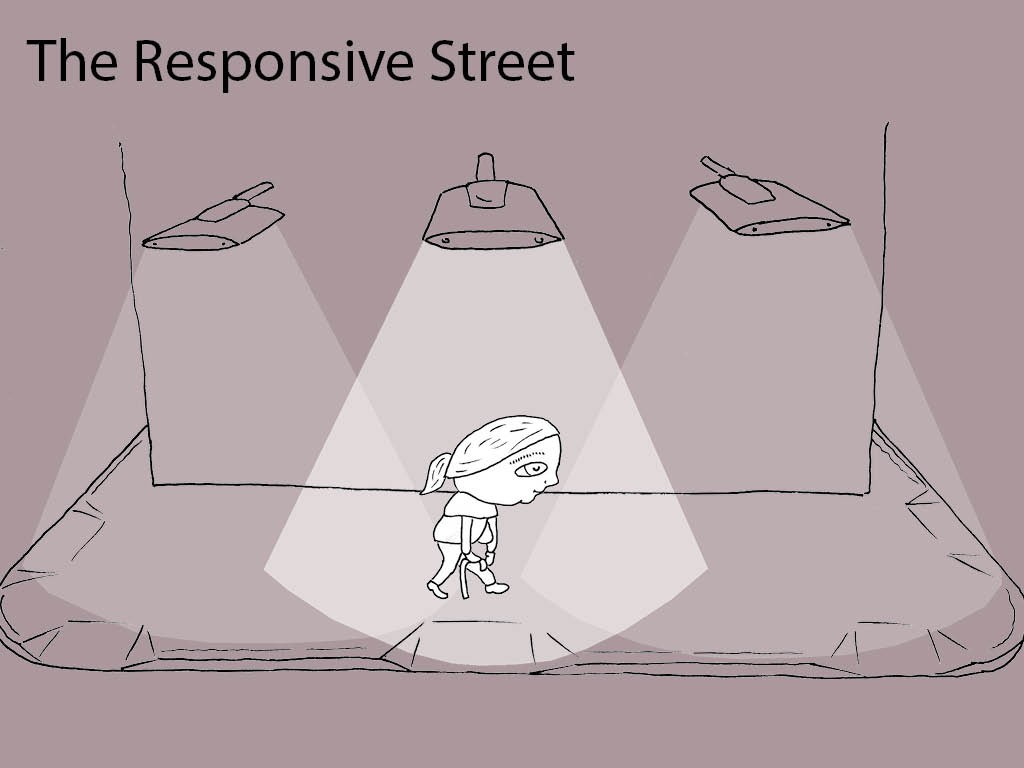

Another example of binding information to matter is a new project I am working on with a leading UK street furniture company. The impetus came from another area of interest for me: accessibility and public space.

The problem here is that the design of a street is a compromise between the needs of different users.

I was interested in the idea of responsive web design, pushing a different version of a site to different people depending on their needs. I was wondering if the street could be responsive in the same way: aware of who was using it and able to provide them with some of the affordances they need.

We’re currently building a proof of principle of the system to demo at some landscape events and hopefully we’ll be able to work it onto a live scheme in not too long.

The next lesson is one we can learn from the asymmetry that is intrinsic in the second law. Indeed yet another way of stating it is:

The lesson and opportunity for the internet of things is to capture information when it’s fresh. Bringing information out of the physical world and into the cloud as cleanly as possible should minimise its entropy, maximising the efficiency of the system.

What does this mean in practice?

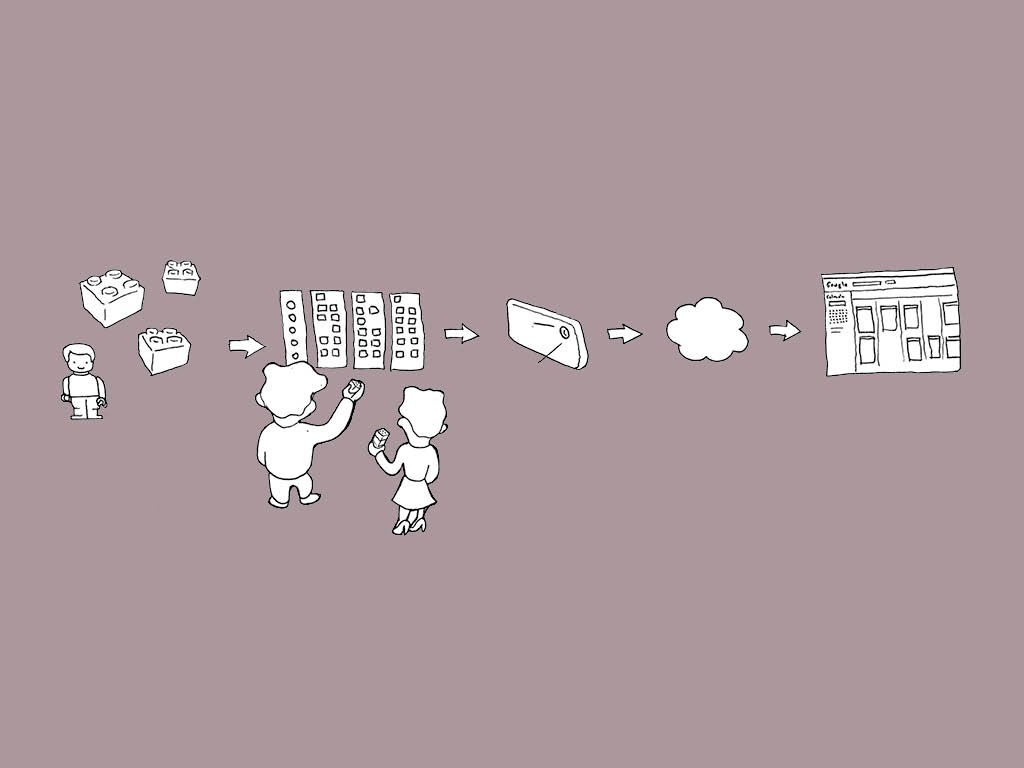

A really simple example of optimising a physical system to minimise the entropy of its transition to digital is the Lego calendar by my friends Vitamins design.

They wanted to create a tangible physical calendar system that they could visualise and walk around in order to plan their projects and allocate time. But they needed it to be compatible with the digital calendar they all accessed when they were out of the studio.

They hit upon using Lego. Firstly it is the right size, and apportioning a stack of bricks is a really wonderfully tangible way to resource a project. More importantly in this context, because

the physical elements are square, brightly coloured blocks, and so in terms of machine vision have a very low entropy, the digital calendar can easily be updated by e-mailing a photo of the Lego version to the software.

Here’s a diagram of how their system works.

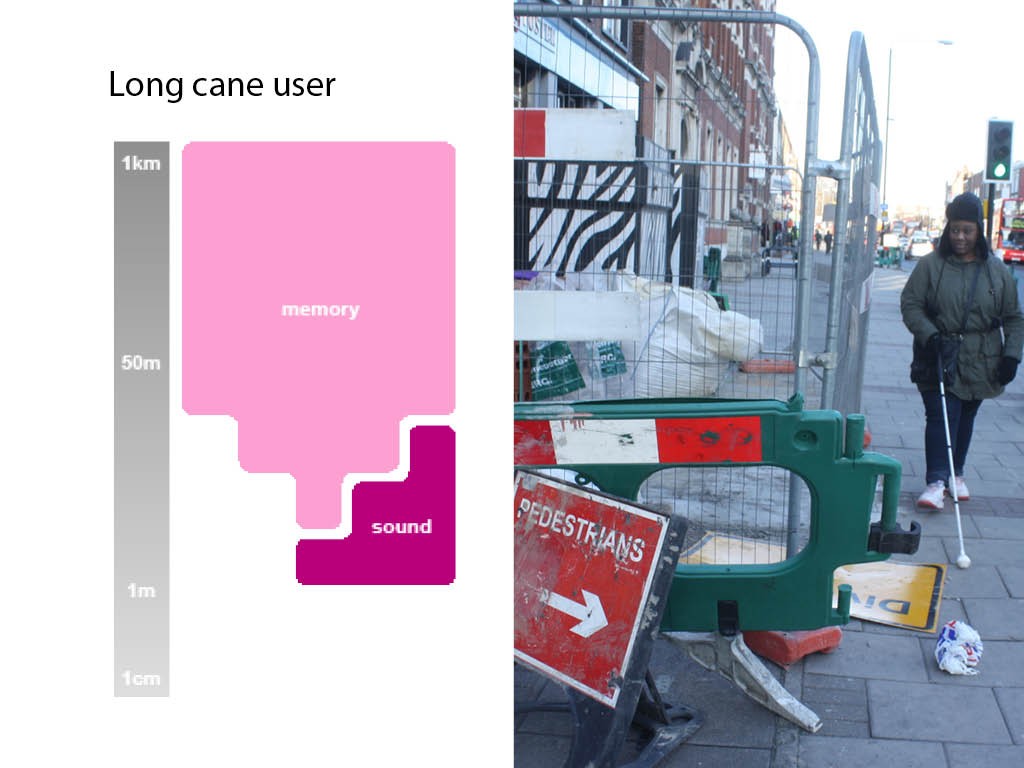

An example of this from my own work is the Sight Line project.

I was working with a visual impairment charity, the Royal London Society for Blind People, looking at how roadworks could be made less disruptive to people with sight loss.

This in itself is an entropy problem because people with visual impairments rely so significantly on their mental maps of an area to navigate. Unexpected disruptions or diversions can act as a significant source of entropy, making a mess of these mental maps.

What was required was an information source which could compensate for the navigational information that was destroyed by the roadworks.

I created a high-contrast visual and tactile coding system for the roadworks barriers which worked in conjunction with a re-designed sign to communicate the safe route through the works. However the bandwidth of such a system is very limited.

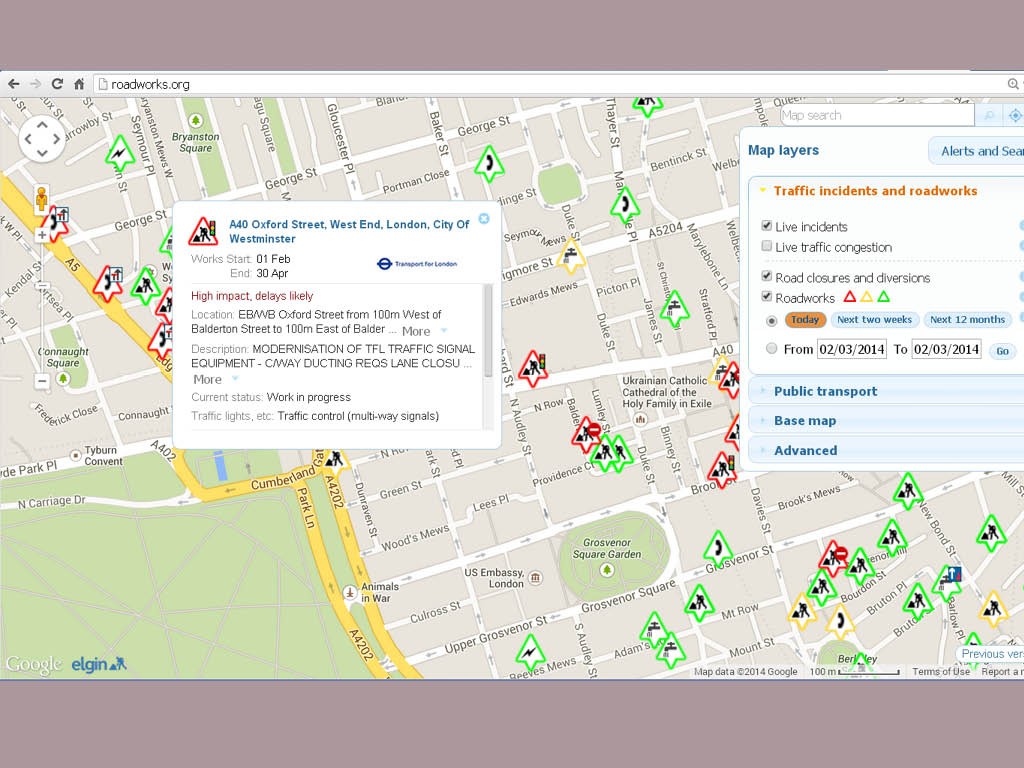

I realised that a digital layer was the way to communicate the more complex information, but where would it come from? There is already a digital registry of roadworks that contains the data used to give the operatives permission to dig somewhere. The problem with this data is that its entropy is very high, written as it is by someone in a back office several weeks before the works actually happen.

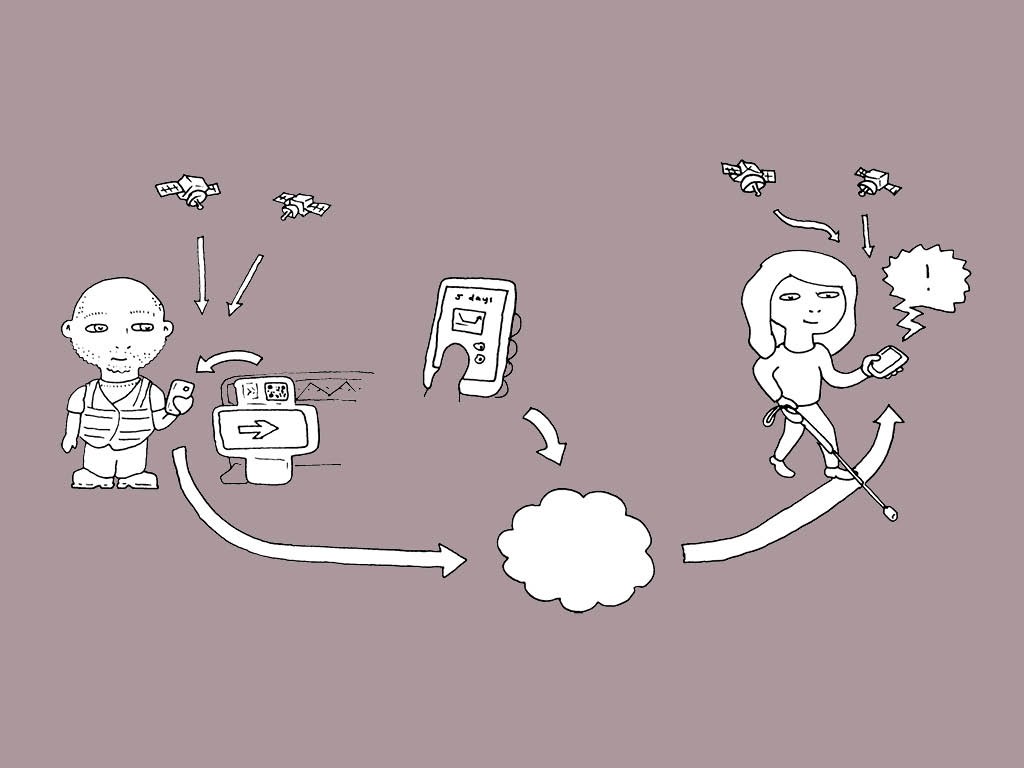

In the spirit of capturing information when it’s fresh the best time to gather this data is from the operatives who have just set out the signs and barriers. They know what they have just set up.

I created an app with simple checkboxes that generated a text description of the site which could be retrieved as large high contrast text or read aloud by the retrieval app, based on locational proximity. To minimise the entropy of the location data I put QR codes on the signs so that the operatives actually had to be standing in front of them when logging the location.
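The checkbox-to-text step can be sketched in a few lines of Python. This is an illustration of the idea only: the checkbox names, the wording of the sentences and the sign identifier are all hypothetical, not taken from the real app.

```python
# Hypothetical checkbox options and the sentence each one contributes.
PHRASES = {
    "footway_closed": "The footway is closed.",
    "diversion_into_road": "Pedestrians are diverted into a barriered walkway in the road.",
    "ramp_provided": "A ramp is provided at the kerb.",
    "crossing_closed": "The pedestrian crossing is out of use.",
}


def describe_site(sign_id, ticked):
    """Build a plain text description from the boxes the operative ticked."""
    # Sentences always come out in the fixed order defined in PHRASES.
    sentences = [text for key, text in PHRASES.items() if key in ticked]
    if not sentences:
        sentences = ["No changes to the normal route were recorded."]
    return f"Roadworks at sign {sign_id}. " + " ".join(sentences)


if __name__ == "__main__":
    # sign_id stands in for whatever the QR code on the sign encodes.
    print(describe_site("A12", {"ramp_provided", "footway_closed"}))
```

Constraining the input to checkboxes keeps the entropy of what the operative records low, and the same text can then be shown large and high contrast or passed to a screen reader.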

Here’s the system diagram

The Nest thermostat also does a great job of squirreling away information captured at just the right moment. I’m sure you all know how it works, but by capturing the intentions of its users over time, right when it’s freshest – the point when they adjust it, it is able to build up a control program far more nuanced than anyone would be prepared to actually program.

So we get that picking up information from the physical world at the moment when it is freshest, cleanest and most unambiguous is a good way to make an effective internet of things system. But what if you can’t get information when it’s fresh and you are stuck with messy, high entropy information coming into your system? A lot of internet of things products, especially wearables, find themselves in this situation. As the second law says, you can’t improve the quality of the information you have. To do that time would have to go backwards.

What you can do is add information from somewhere else.

A fantastic example of this is the good old Google Pagerank algorithm.

Again, I’m sure you all know this story, but just in case someone doesn’t: back in 1997 the information powering search engines’ result ranking was the number of times the searched term was mentioned on a page. This did not correlate particularly well with the page the user was probably looking for. As Brin and Page write in their original paper on Google

Their great insight was to utilise an entirely separate, and much higher quality, store of information: the subjective judgement of everyone who had ever created a hyperlink. By using this to rank the list of indexed pages they were able to significantly reduce its entropy, a fact acknowledged by Brin and Page in the title of their paper on PageRank, “The PageRank Citation Ranking: Bringing Order to the Web”.
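For anyone who hasn't seen how link judgements turn into a ranking, here is a minimal sketch of the textbook version of PageRank in Python, computed by repeated redistribution of rank along links. It is a simplification for illustration, not Google's production system; the damping value of 0.85 is the commonly quoted one and the tiny link graph is made up.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration.

    links maps each page to the list of pages it links to.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page gets a small baseline share regardless of links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Each link passes on an equal share of the linking page's rank.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank evenly.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


if __name__ == "__main__":
    web = {"a": ["c"], "b": ["c"], "c": ["a"], "d": ["c"]}
    for page, score in sorted(pagerank(web).items(), key=lambda kv: -kv[1]):
        print(page, round(score, 3))
```

In this toy web, page c comes out on top because three other pages link to it, even though nothing about the text on c was examined. The extra information comes entirely from the people who made the links.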

With wearables like the Nike Fuel Band, FitBit and Jawbone UP the entropy of the information coming into the system is also quite high. Basically the system has one accelerometer trace coming in from one part of the body and then has to work out whether the user is walking, running, cycling, driving or just waving their wrist about.

Here the pool of additional information the systems rely on is datasets built up by the manufacturers carefully measuring lots of parameters for lots of different people doing lots of different things with them. With access to this data to power their algorithms the systems are able to infer a surprising amount from that noisy accelerometer feed.

Try wearing devices from several manufacturers at the same time though and you’ll realise from the disparity in their results that they can’t all be doing a fantastic job.

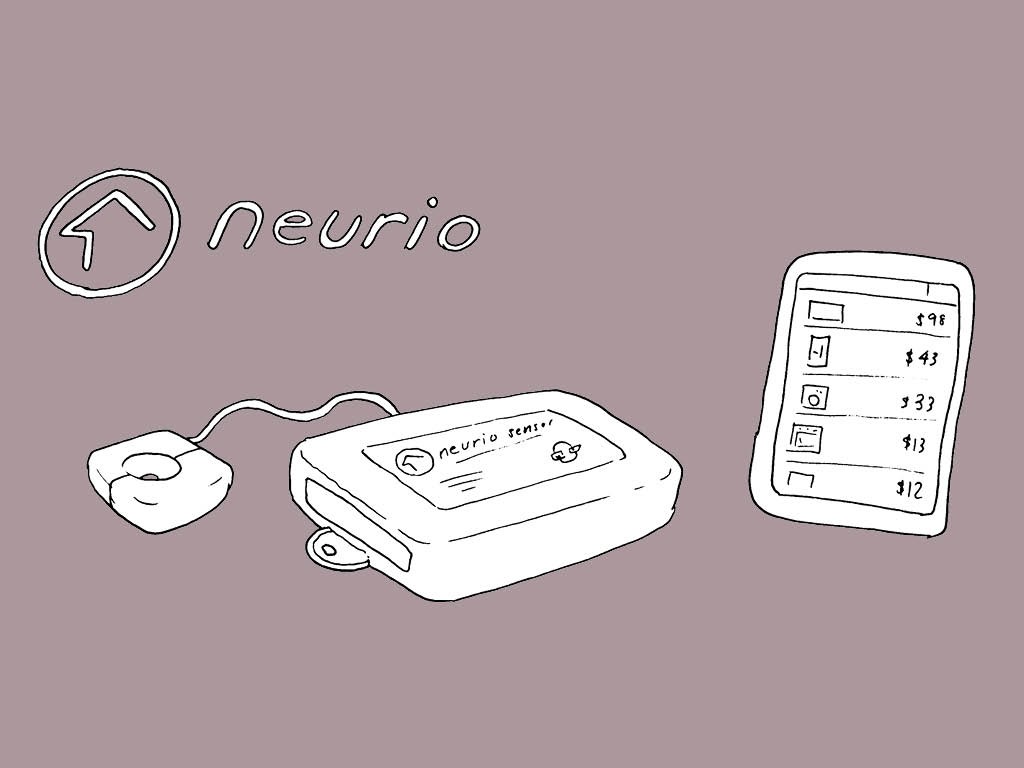

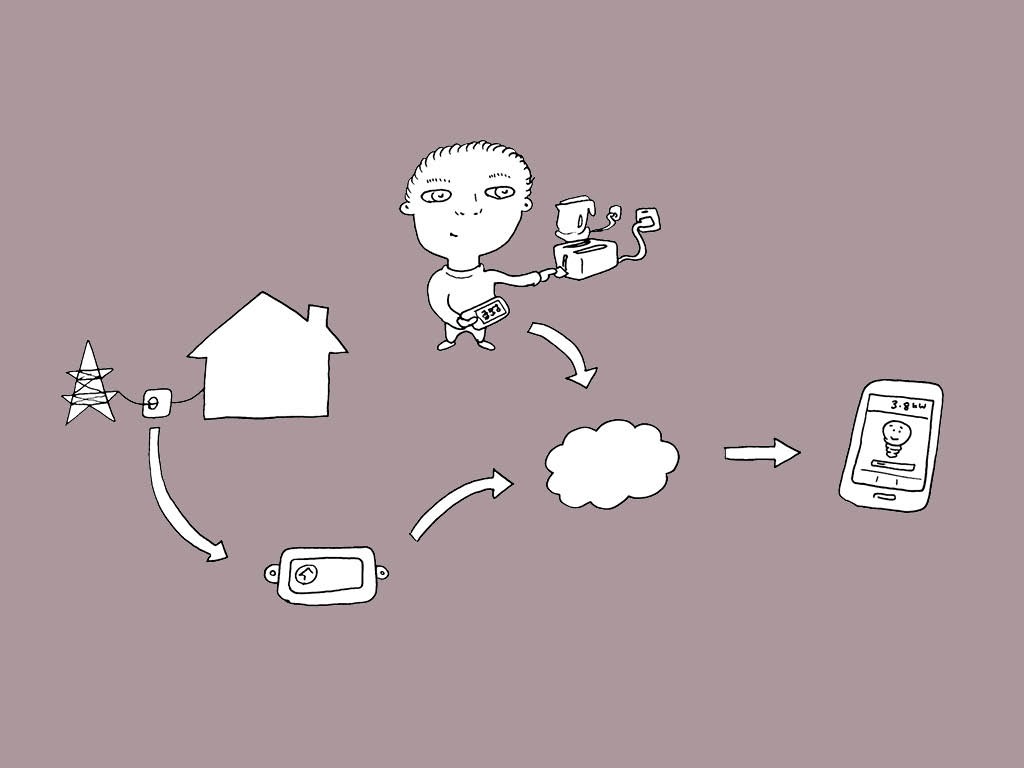

Another example of fixing a high entropy data stream with some extra information is a Canadian startup called Neurio. They took on a problem called disaggregation which has been eluding the major players in electricity monitoring for some time.

Basically to really understand home electricity use you need to know which appliances are using what electricity. Attaching individual monitors to each one is expensive and inconvenient. You ought to be able to do it just by watching the overall house consumption – with a sensor on the main circuit breaker – and recognising the load characteristics of the different appliances, but no one has actually managed to implement this reliably in practice yet. After a successful Kickstarter, Neurio haven’t shipped yet so they may not manage it either, but it’s looking good.

Their insight was to supplement the load profile database everyone was using with data collected from the user, who walks around their house with a smartphone app identifying each appliance before switching it on. This gives them the perfect secondary information source to reduce the entropy of the aggregated electricity feed.
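A very simplified sketch of the idea in Python: spot sudden jumps in the whole-house power reading, then match each jump against step sizes the user has labelled by switching appliances on. This is an assumption-laden illustration, not Neurio's algorithm; the wattages, threshold and tolerance are invented, and real appliances have far messier load profiles than a single step.

```python
def detect_events(power_watts, threshold=50.0):
    """Return (index, delta) for each jump in whole-house power above threshold."""
    events = []
    for i in range(1, len(power_watts)):
        delta = power_watts[i] - power_watts[i - 1]
        if abs(delta) >= threshold:
            events.append((i, delta))
    return events


def label_events(events, signatures, tolerance=0.15):
    """Match each jump to the user-labelled appliance with the closest step size."""
    labelled = []
    for index, delta in events:
        best_name, best_error = None, None
        for name, watts in signatures.items():
            # Relative difference between the observed jump and the labelled one.
            error = abs(abs(delta) - watts) / watts
            if error <= tolerance and (best_error is None or error < best_error):
                best_name, best_error = name, error
        state = "on" if delta > 0 else "off"
        labelled.append((index, best_name or "unknown", state))
    return labelled


if __name__ == "__main__":
    # Hypothetical step sizes captured while the user walked round the house.
    signatures = {"kettle": 2000.0, "fridge": 120.0}
    readings = [300, 300, 420, 420, 2420, 2420, 420, 300]
    print(label_events(detect_events(readings), signatures))
```

Without the user-supplied signatures the jumps are just anonymous numbers; with them, each one can be tied back to a particular object in the house.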

Expect them to be copied by all of the established players in the next year.

The next lesson I want to teach you goes back to the spirit of the MessSearch. I made the MessSearch to illustrate how the binding of digital information to physical objects could allow messy situations to exist without the cost to utility that they incur when information and matter are separated.

Basically it allows us to tolerate messier situations without having to tidy up to create the required order.

I think these situations are the killer apps for the internet of things. Situations which are messy but where tidying up is undesirable.

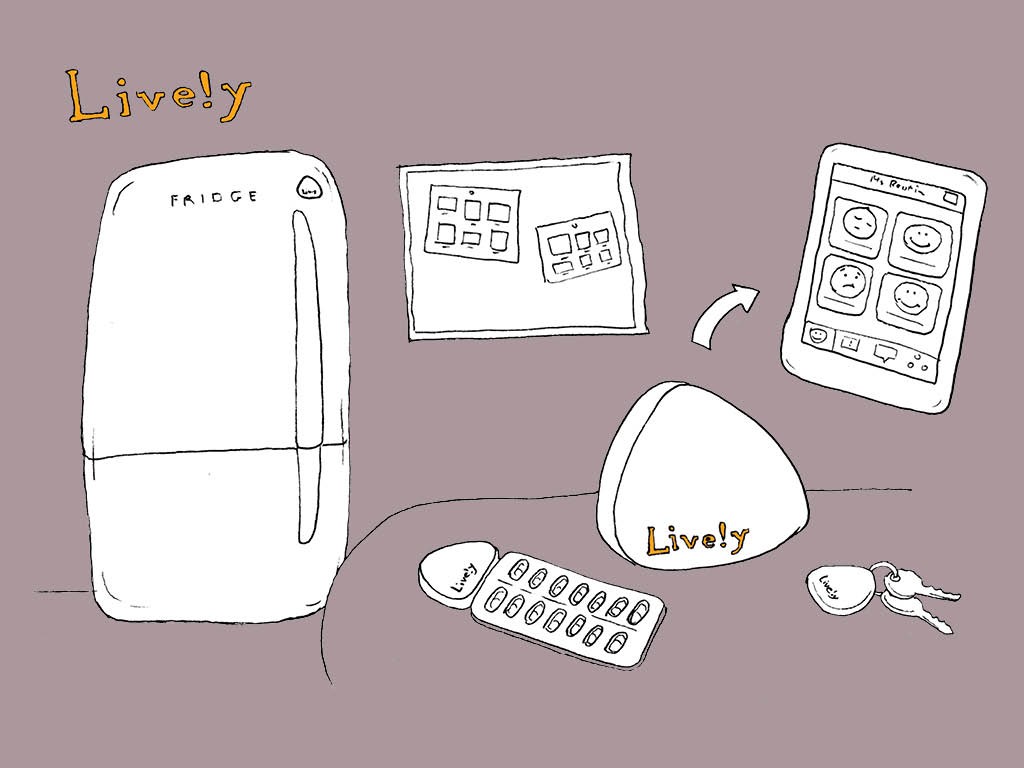

A great example of this is independent living. A person, often of advanced age, could be living on their own with a network of people around them worrying that they are not able to cope. Tidying up in this situation would be moving them into a care home but this is usually not a desirable step for anyone involved. Connected products are able to provide the layer of information that can make a messy situation like this workable.

I’m working on a product in this space but unfortunately we’ve not launched yet so I’m not able to talk about it.

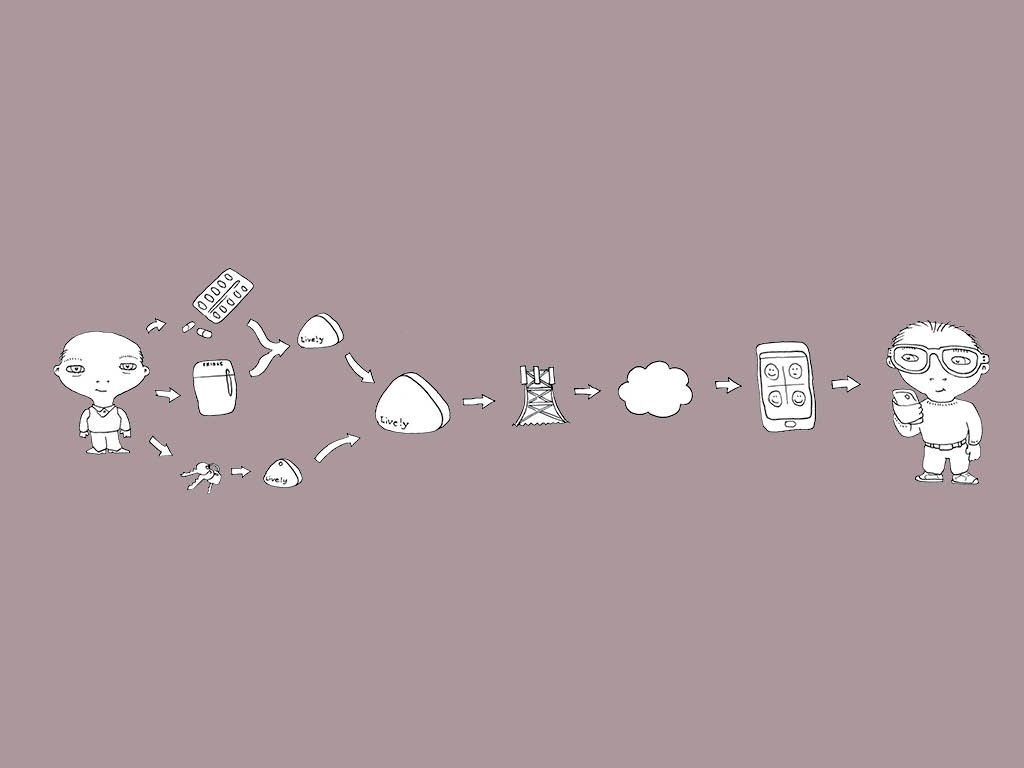

A product that is already out and seemingly doing a great job is Lively. It’s a network of sensors that watches out for a person’s day to day activities and then lets their relations know if they have or haven’t occurred.

The key challenge in this space is to create something which collects enough information to be sufficiently reassuring whilst at the same time being acceptable to and not stigmatising the person who is being monitored.

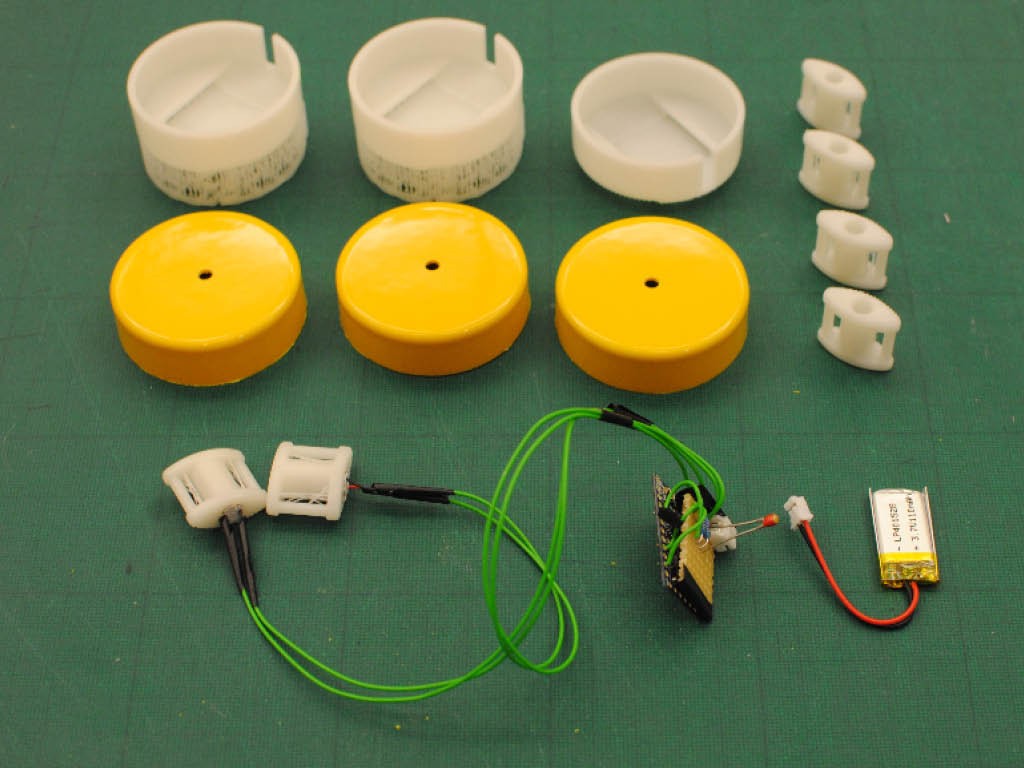

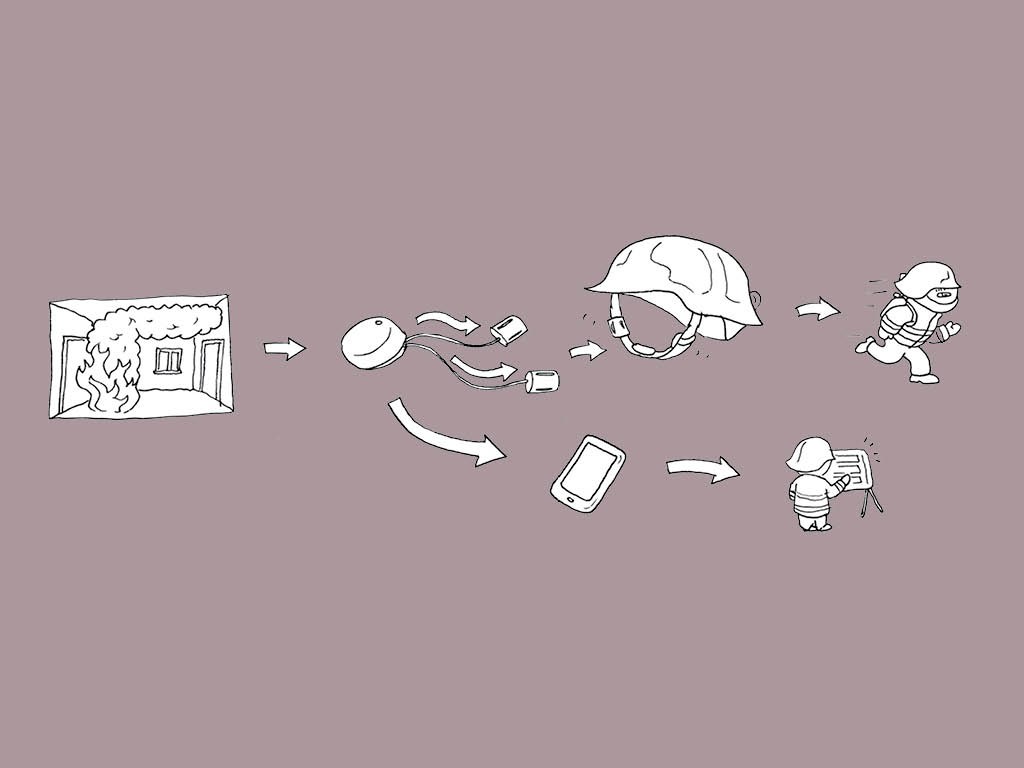

An example of an internet of things project in a really messy situation that I’ve worked on and can talk about is Knry.

Here the situation is firefighters dealing with a blaze in a large building. I started this project at a hackathon at Imperial College London and it was later taken on by a London based accelerator. We were briefed by Hampshire fire and rescue, specifically Mick Johns who had spent the past three years investigating an incident where two firefighters had lost their lives in an apartment building in Southampton.

Mick did the most incredible job of framing the problem that had led to their deaths. Basically it was caused by the character of the fire changing and the firefighters not realising in time. This was caused by the reduced information available about the temperature around them due to improved insulated clothing.

We built a wearable device that measured the rate of temperature change and provided the firefighters with an unambiguous haptic signal through their helmet straps that they had to get out.
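The core of that logic can be sketched in Python as follows. This is not the Knry firmware: the window length and the alert threshold are placeholder numbers chosen for illustration, and the sketch assumes samples arrive with strictly increasing timestamps.

```python
def rate_of_change(samples):
    """Degrees per second between the oldest and newest (time_s, temp_c) sample."""
    (t0, temp0), (t1, temp1) = samples[0], samples[-1]
    return (temp1 - temp0) / (t1 - t0)


def should_alert(samples, window_s=10.0, limit_c_per_s=2.0):
    """True if temperature rose faster than the limit over the recent window.

    window_s and limit_c_per_s are illustrative values only.
    """
    latest_time = samples[-1][0]
    recent = [s for s in samples if latest_time - s[0] <= window_s]
    if len(recent) < 2:
        return False
    return rate_of_change(recent) > limit_c_per_s


if __name__ == "__main__":
    steady = [(0, 40.0), (5, 42.0), (10, 44.0)]
    changing = [(0, 40.0), (5, 45.0), (10, 90.0)]
    print(should_alert(steady), should_alert(changing))
```

Watching the rate rather than the absolute temperature is what lets the device pick up the fire changing character, and reducing the output to a single yes or no is what makes the haptic signal unambiguous.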

We also fed this data back to the fire controller, further reducing the entropy of the situation.

There are countless more messy situations like these two where internet of things technology can make a genuinely transformative difference by allowing the right sort of order to emerge when required. The technology will genuinely change and in some cases save people’s lives. We really need to start using it to do that, not to create amusing curiosities.

This brings me to the last lesson we can learn from thermodynamics.

Before I start I’m going to pose you a question to think about. Why is the internet of things dominated by startups and not more established companies? Why did Google have to pay three point two billion dollars for Nest three years after discontinuing its own smart energy project? Why, despite over a decade of pushing their vision of smart homes, are German engineering giant Siemens almost invisible in the space? Why was the only product I’ve discussed today made by a company that’s existed for more than ten years, the Nike Fuel Band, designed and developed externally by an advertising agency?

The answer lies in the entropy of the design process.

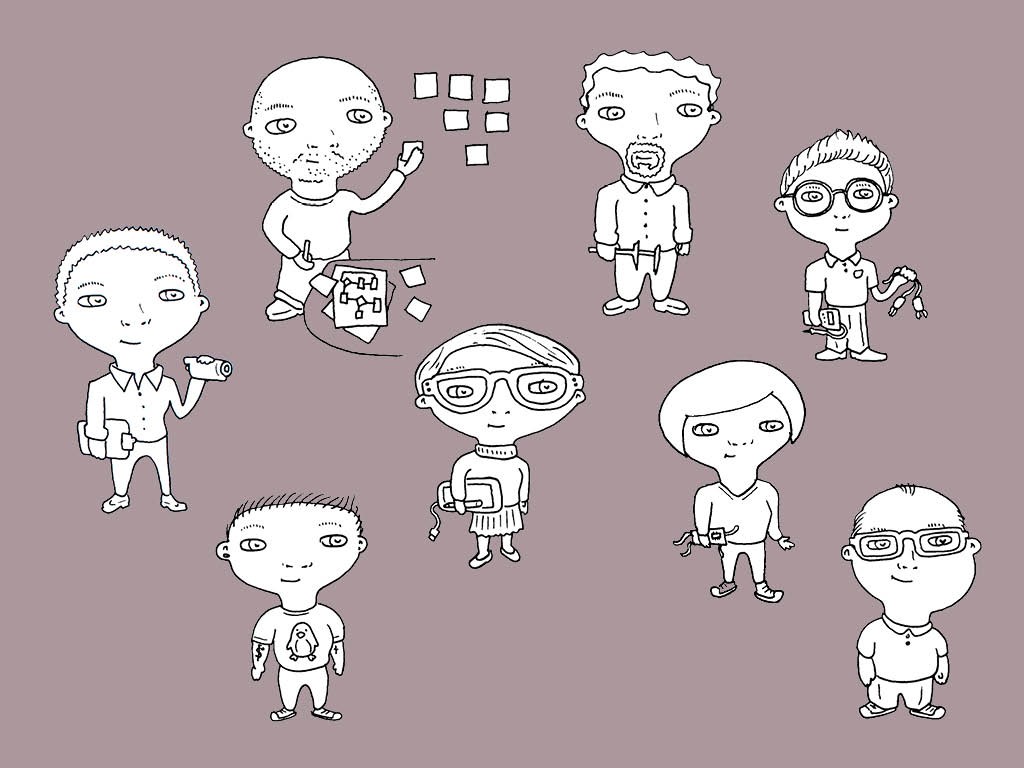

To create the internet of things systems that will solve these really meaningful problems takes a really effective design process.

First of all the problems need to be identified and properly framed.

As they usually involve the messy behavior of humans this requires sensitive and detailed anthropological research. Really getting into the lives of the people who will affect the system and be affected by it.

Next the insights from the research need to be converted into an overall design concept which needs to be articulated and improved upon. The design concept needs to respond to the nuances of the research but also be deliverable with technology that is either existing or has a realistic chance of being developed within the resources of the project.

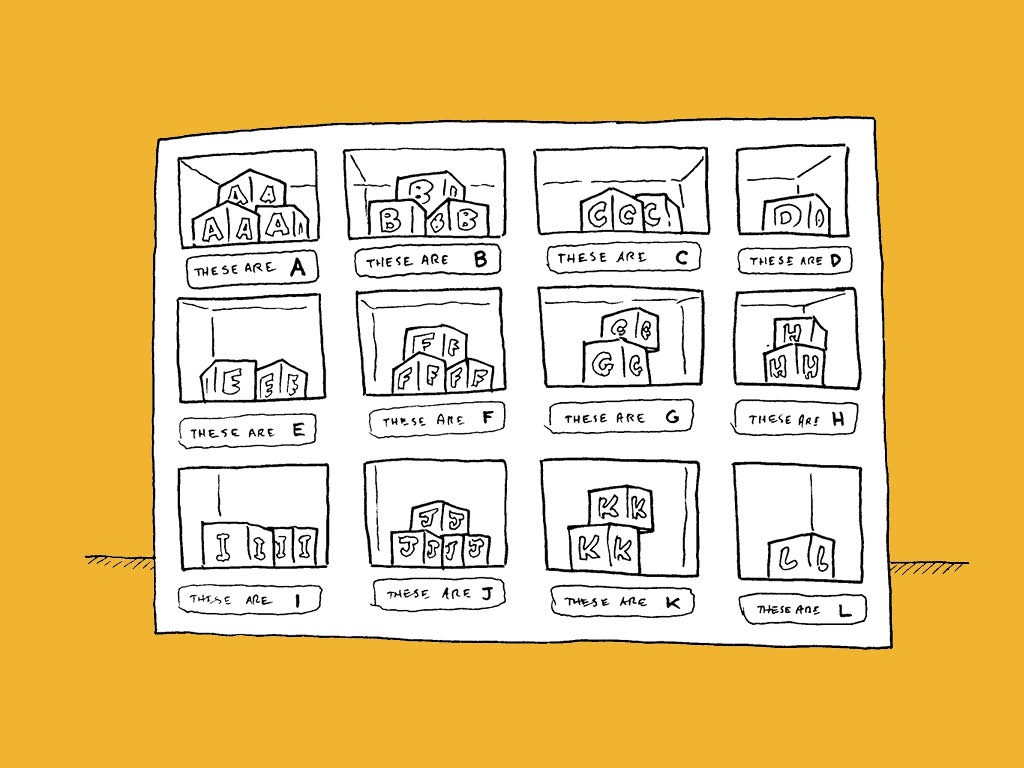

This design concept then needs to be delivered. As it is an internet of things product it will probably require product design, mechanical engineering,

electronic engineering

firmware

user interface design

front and

back end development.

I think you’ve probably appreciated by now the thrust of the second law: that processes tend to reduce the quality of information, or increase its entropy. A classic example of such a process is the handover of information between the different people working on delivering a product. This handover is unavoidable within both large in-house design and engineering teams and well established agencies, both of which will have specialists in each of the areas I’ve just described passing a project between themselves.

The problem with handover, particularly the translation of qualitative human insights into designs for products, interfaces and software is that important information can so easily be lost – perhaps because it didn’t seem important to the person handing over.

That is why the startups have such an advantage in this space. The whole user experience of the system, including both the research informing it and the engineering determining how it is delivered by the technology, is owned by one to three people. They are necessarily multifunctional, meaning that almost nothing ever needs to be handed over; it’s all in their heads.

The agile crowd have known about this for ages when it comes to straight software development. But when you can just chuck something online and start gathering analytics research is less important.

Agile hardware ends up working out a bit different. You probably don’t want to be randomly mailing a hacked together minimum viable product to thousands of people to work out if it’s any good. It’ll be really expensive and as most of them probably won’t manage to plug it in and connect it to their WiFi you won’t even get any analytics back.

I think Agile hardware means a few people do some really sensitive but rigorous anthropological research with people in the context of use, then the same people design some system architecture, hack a minimum viable product and take it back to the users as a probe for further research. Then they iterate until they have something they think is worth developing properly. At this point they bring in some really excellent specialists to deliver a fantastically high quality embodiment.

This is what I understand Agile hardware looks like, how I work in my agency and how I minimise the entropy of our design process to create an overall connected product system that really solves a problem for someone.

If this sounds interesting come up and have a chat or e-mail me at ross@rossatkin.com

Thanks so much for putting up with all of that theoretical thermodynamics, and for coming with me on this journey. And for that last sales pitch. I’ll happily take some questions if anyone has one.